You open your eyes and adjust to the unfamiliarly bright and sandy scenery. You don’t remember falling asleep... or being knocked unconscious. You do, however, remember that you’re the CEO of a fast-growing startup and have a call with the board later in the day. You try to lift a hand to shelter your eyes from the sun as you get your bearings, but meet resistance. You’ve been tied up with rope! As you fidget, you notice one metal rail digging into your neck and another under your ankles. There’s a blur in the distance and an audible hum. You can’t make it out, but the trope is obvious; it has to be a train.

You hear a repeating crescendo of chimes on the left side of your head. Your anonymous captor was kind enough to remove your cellphone and place it next to you. Or was it to taunt you? You can’t see the screen but you know what the display says, “BOARD MEETING PREP CALL W/ DATA TEAM.” You’re now perfectly at peace. These folks are smart, and have sterling credentials to boot. They’ll fix your problem; they always do.

You manage to join the meeting by rubbing your ear against the phone and quickly describe your predicament. Just like any other day in the office, you then commission a series of complex analyses and they happily oblige. One of them (she, her) masterfully pipes in the live video from your call and trains a computer vision model that dynamically removes you from the image, leaving only the desert expanse. She compares it to millions of datapoints from Google Maps and produces a list of places where you’re most likely to be. Another (he, him) scrapes your phone, Slack, and email records[1] to confirm the last time you were conscious. He then cross-references that information with the list of areas from the first data scientist and charts it with a radius around your last known location. Within minutes, they’ve successfully pinpointed your location to a two square mile area in Arizona.

Since this is obviously a train-related problem, you assign your last data scientist (they, them) to get access to logs of prior train trips along that route. Summary statistics show that passenger trains are most common, but you didn’t hire them just to do simple analyses. They train[2] a predictive model that takes into account time of day, year, and market trends, and conclude that there’s an 87% chance you’re going to get hit by a freight train that shares the same tracks.

“Great work, all! You really are the best,” you say as you start to make out a line of freight cars containing shipping containers as the train rumbles along a curve. They high-five each other, knowing they’re still at the top of their fields, and are going to annihilate[3] their next performance reviews.

Splat.

This example, although farcical, contains a valuable lesson on data strategy. Data analyses and models that aren’t integrated into decision-making processes are not only unlikely to be useful; they can be a death sentence[4] for your organization. Let’s examine the diagram of a decision below.

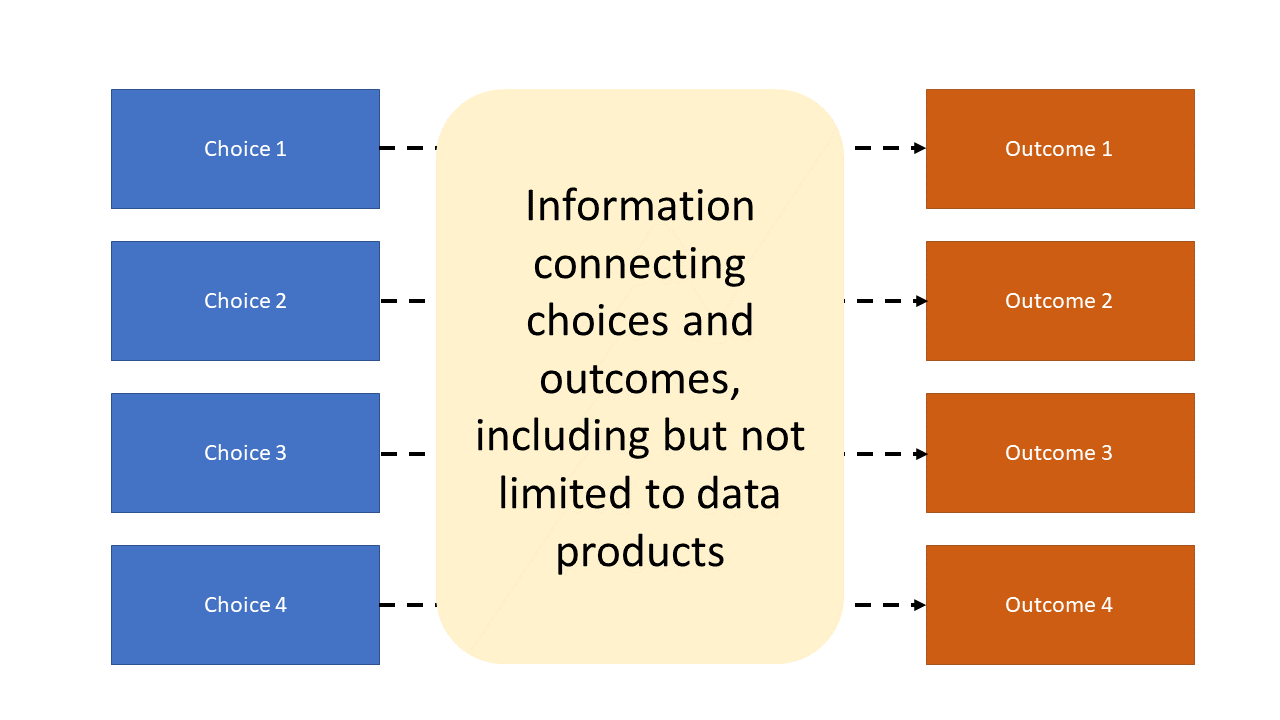

The dashed lines from choice to outcome are discovered through information gathered in the decision-making process, which can, but does not have to, include typical data exercises like summarization, visualization, and modeling. It’s possible you could have gotten out of your predicament with absolutely no data work by doing some wriggling or calling for help.

**Requesting data analyses or models related to your problem without plans for how you’ll use that information is a recipe for disaster.** As with many bolded passages in blog posts, you might have read the prior sentence and thought, “that’s obvious.” That doesn’t mean the behavior isn’t extremely common, and my hunch is that it affects both beginner and experienced decision makers. Seeking information that doesn’t actually affect your decision is called information bias. I suspect it happens so commonly in the data space because data summaries, analyses, or models related to problems are easy to come up with. The requester is implicitly, and often incorrectly, assuming that he or she will magically know what to do with the results when received. I strongly believe this behavior is a major contributor to why 85% of data projects fail.

Being tied up on the train tracks is an extreme example of a situation in which one can anticipate a negative event. We frequently encounter less extreme examples of this in the business world, for example, knowing we have a large number of subscribers to our product who may churn soon, when their promotional period ends. We could, like the kidnapped CEO in the example above, commission endless reports and analyses on our imminently churning users. However, if they’re not linked to decisions that will help prevent the churn, they don’t accomplish anything. We don’t need to know and understand every detail about the train, real or metaphorical, that’s going to hit us; we only need information that could help save us.

Recall the exercises you had your data scientists do: you had two work together to predict your location and a third figure out the type of train you were most likely to be hit by. I picked these because I want to show that with a little adaptation, your life could have been saved. If the last data scientist had figured out not just the type of train, but more specifically which company was most likely to be operating it, someone could have contacted that company and asked it to stop the train.

In order to avoid these sorts of catastrophes in the future, I propose a data adaptation of a segment of the Gettysburg Address: “Data of decisions, by decisions, for decisions.”

Of: Recognizing that the data we have available to us is the result of prior data strategy decisions. This didn’t show up in my example, but I’ve seen it frequently in the workplace. The data that could best fix a problem often won’t be attainable until after the decision needs to be made. Therefore, data strategy decisions about what data to collect, often ones made well in the past, will affect the data we’ll have available to us at decision time. I plan on making this topic the subject of future posts.

By: We first decide which data products (summaries, visualizations, models) should be created in order to inform a decision, instead of summarizing, visualizing, and modeling with no goal in mind. If you’re into the business and self-help space, this feels like an adaptation of the second habit in The 7 Habits of Highly Effective People: “Begin with the end in mind.”

For: The resulting data product (raw data, analysis, or model) actually affects the decision of interest. If the same decision would have been made regardless of what the data product shows, then it isn’t necessary to create. I also plan on exploring this idea in future posts.
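To make the “For” test a little more concrete, here is a minimal sketch in Python. Everything in it is hypothetical and made up for illustration: the `changes_decision` helper, the two decision rules, and the operator names are my assumptions, not part of any real tool or of the story above. The idea is simply to list the results a data product could plausibly return, write down what you would do for each one, and check whether the action ever differs.

```python
from typing import Callable, Iterable


def changes_decision(decide: Callable[[str], str],
                     possible_results: Iterable[str]) -> bool:
    """Return True if at least two possible results lead to different actions."""
    actions = {decide(result) for result in possible_results}
    return len(actions) > 1


# Hypothetical decision rule: what the CEO does once the train type is known.
def act_on_train_type(train_type: str) -> str:
    # Freight or passenger, the plan is the same, so the result is irrelevant.
    return "keep wriggling"


# Hypothetical decision rule: what the CEO does once the operator is known.
def act_on_operator(operator: str) -> str:
    # Knowing the operator tells you whom to call, so the action depends on it.
    return f"call {operator} and ask them to stop the train"


# Made-up lists of results each analysis could return.
train_types = ["freight", "passenger"]
operators = ["Operator A", "Operator B"]

print(changes_decision(act_on_train_type, train_types))  # False
print(changes_decision(act_on_operator, operators))      # True
```

Under these assumptions, the train-type model fails the test because every possible answer leads to the same action, while the operator analysis passes because its answer determines whom to call. A real version would need honest decision rules written down before the analysis is requested, which is most of the point.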

My hope is that by understanding this framework, organizations and individuals will make better decisions using their data as well as prevent failed data projects.

I started this newsletter in part to encourage more discussions and knowledge sharing around data strategy. I’d love to get your feedback, questions, and comments!

[1] He got a friend in IT to give him access for, uh, business reasons?

[2] I should have ended the post here.

[3] Or here.

[4] Ok I’m done.

I think the "data of decisions" point is probably the most under-appreciated. As my stats professor in grad school put it, "The first step is to count something."